Week Overview

| Time | Monday | Tuesday | Wednesday | Thursday | Friday |
|---|---|---|---|---|---|
| 09:00-09:15 | FF | FF | FF | | |
| 09:15-09:30 | FF | | | | |
| 09:30-10:30 | Keynote 1 | Keynote 2 | Keynote 5 | Keynote 6 | |
| 10:30-10:45 | | | | | |
| 10:45-11:00 | | | | | |
| 11:00-12:15 | | | | | |
| 12:15-12:30 | | | | | |
| 12:30-12:45 | She-Lunch | Best Paper Jury | | | |
| 12:45-13:00 | Closing Session | | | | |
| 13:00-13:15 | | | | | |
| 13:15-13:45 | | | | | |
| 13:45-14:00 | | | | | |
| 14:00-14:45 | | | | | |
| 14:45-15:00 | | | | | |
| 15:00-15:30 | | | | | |
| 15:30-16:00 | Poster Q&A | | | | |
| 16:00-16:30 | | | | | |
| 16:30-17:00 | | | | | |
| 17:00-17:30 | Opening Session | | | | |
| 17:30-17:45 | | | | | |
| 17:45-18:00 | EG General Assembly | | | | |
| 18:00-18:45 | | | | | |
| 18:45-19:00 | | | | | |
| 19:00-19:30 | Social Event | Fellows' Dinner | IPC Dinner | | |
| 19:30-21:00 | Public Lecture | | | | |
| 21:00-22:00 | | | | | |
| 22:00-22:30 | | | | | |

Daily Program

Full Program

Full Paper 1

Animating Humans with Gestures and Style

Session chair: Marc Habermann

Full Paper 2

Diffusion and Beyond: Controlled Image Generation and Stylization

Session chair: Amit Bermano

Full Paper 3

Structured for Speed: Spatial Representations for Real-Time Rendering

Session chair: Michael Guthe

- Real-Time Rendering of Dynamic Line Sets using Voxel Ray Tracing
- Encoding Occupancy in Memory Location for Efficient and Compact High-Resolution Voxel Structures (CGF paper)
- NePO: Neural Point Octrees for Large-scale Novel View Synthesis (CGF paper)
- NAADF: Globally Illuminated Voxel Worlds Accelerated with Nested Axis-Aligned Distance Fields

Full Paper 4

Covering the Surface: Texture Synthesis, Patterns, and Compression

Session chair: Rafael Kuffner dos Anjos

- Real-time by-example texture synthesis and filtering using local statistics exchange
- Variable-Rate Texture Compression: Real-Time Rendering with JPEG
- ProcTex: Consistent and Interactive Text-to-texture Synthesis for Part-based Procedural Models
- Lightmap Compression with Color-Coherent UV Clustering and Cascade Texture Optimization
- Controllable Intrinsic Surface Pattern Generation Using Slime Mold Simulations

Full Paper 5

Learning Surface and Scene Representations

Session chair: Ruben Wiersma

Full Paper 6

Go with the Flow: Fluid Simulation and Rendering

Session chair: Holger Theisel

Full Paper 7

Structural Geometry: From Fabrication to Fracture

Session chair: Daniel Zint

Full Paper 8

From Pixels to Scenes: 3D Reconstruction and Generation

Session chair: Linus Franke

- GS-2M: Material-aware Gaussian Splatting for High-fidelity Mesh Reconstruction
- Layer3D: A 3D Layered Representation for Multiview Vector Graphics
- GeoFusionLRM: Geometry-Aware Self-Correction for Consistent 3D Reconstruction
- UniCross3D: Unified Cross-View and Cross-Domain Diffusion for Consistent Single-Image 3D Generation

Full Paper 9

Motion in the Wild: From Individuals to Crowds

Session chair: Yiorgos Chrysanthou

- Physics-Based Motion Tracking of Contact-Rich Interacting Characters
- Step2Motion: Locomotion Reconstruction from Pressure Sensing Insoles
- ContactVision: Learning Foot Contact from Video for Physically Plausible Gait Animation
- Herds from Video: Learning a Microscopic Herd Model from Macroscopic Motion Data (CGF paper)
- MPACT: Mesoscopic Profiling and Abstraction of Crowd Trajectories (CGF paper)

Full Paper 10

Light Transport: Sampling, Waves, and Denoising

Session chair: Junqiu Zhu

Full Paper 11

Hierarchical Geometry: Optimization and Simplification

Session chair: Renato Pajarola

Full Paper 12

Temporal Vision: Video Generation, Pose, and Narrative

Session chair: Marc Christie

- Story2Board: A Training-Free Approach for Expressive Visual Storytelling
- SAGE: Structure-Aware Generative Video Transitions between Diverse Clips
- SEE4D: Pose-Free 4D Generation via Auto-Regressive Video Inpainting
- Enhancing Robust Category-Agnostic Pose Estimation through Multi-Modal Feature Alignment

Full Paper 13

2D and Beyond: Stylized Animation and Reconstruction

Session chair: Nuria Pelechano

Full Paper 14

Solving Deformation: Numerical Methods for Elastic Simulation

Session chair: Mario Botsch

Full Paper 15

Digital Humans: From Capture to Control

Session chair: Sebastian Weiss

Full Paper 16

Measuring and Modeling Material Appearance

Session chair: Enrico Gobbetti

Full Paper 17

From Leaf to Planet: Natural Environment Generation and Simulation

Session chair: Oliver Deussen

Full Paper 18

Neural Appearance: Reflectance, Irradiance, and Light Transport

Session chair: Diego Gutierrez

Full Paper 19

Parametric and Structured Geometry

Session chair: Raphaëlle Chaine

Full Paper 20

Immersive and Interactive: Rendering Across Displays and Devices

Session chair: Karol Myszkowski

Full Paper 21

Maps and Meshes: Parameterization and Geometry Processing

Session chair: David Bommes

- DiskScissors: Cutting Arbitrary-Topology Solids for Bijective Mapping
- Adaptive Use of LBO Bases by Shape Feature Scales for High-Quality and Efficient Shape Correspondence (CGF paper)
- Volume Quantization with Flexible Singularities for Hexahedral Meshing
- Fast Injective Mesh Parameterization via Beltrami Coefficient Prolongation

Full Paper 22

Advancing 3D Gaussian Splatting

Session chair: Seungyong Lee

- Splat-based Metal Artifact Reduction in Cone-Beam CT via Polychromatic Modeling
- Multi-Spectral Gaussian Splatting with Neural Color Representation
- RotGS: Rotation-Guided 3D Gaussian Splatting for Turntable Sequences without Structure-from-Motion
- Adaptive Spatio-Temporal 3D Gaussian Splatting for Scenes with Oscillatory Motion
- OUGS: Active View Selection via Object-aware Uncertainty Estimation in 3DGS

Short Paper 1

Faces, Characters & Human Modeling

Session chair: Aleksander Płocharski

Short Paper 2

Appearance, Imaging & Tools

Session chair: Xavier Chermain

Short Paper 3

Simulation, Geometry & Computational Design

Session chair: Ana Serrano

- VisACD: Visibility-Based GPU-Accelerated Approximate Convex Decomposition
- Tetrahedron-Tetrahedron Intersection and Volume Computation Using Neural Networks
- ConJEB: A Large Elastic Contact Jet Engine Bracket Quadratic Program Dataset
- What a Comfortable World: Ergonomic Principles Guided Apartment Layout Generation
- Beyond Segmentation: Structurally Informed Facade Parsing from Imperfect Images
- Differentiable Objectives for 3D Scene Relighting via Gradient Descent on OLAT Basis Coefficients

Short Paper 4

Rendering Representations & GPU Pipelines

Session chair: Markus Schütz

Tutorial 1

Simulation Methods for Multiphysics Phenomena in Visual Computing

Tutorial 2

A Hands-On Introduction to Discrete Differential Operators on Polygon Meshes

Tutorial 3

Deep Learning on Meshes and Point Clouds

Tutorial 4

Optimal Transport for Fluid Simulation New and Old

Tutorial 5

Fast Explicit 3D Reconstructions and How To Use Them

Tutorial 6

Effective User Studies in Computer Graphics: From Pixels to Perception

Tutorial 7

Convex Optimization in Computer Graphics

Tutorial 8

Introduction to Optimization Time Integration for Solids and Fluids

STAR 1

Magnetic Modeling and Simulation for Computer Graphics

STAR 2

Advances in Neural 3D Mesh Texturing: A Survey

STAR 3

Survey on differential estimators for 3D point clouds

STAR 4

Establishing Shape Correspondences: A Survey

STAR 5

How to Build Digital Humans?

STAR 6

Non-Rigid 3D Shape Correspondences: From Foundations to Open Challenges and Opportunities

Invited STARs - 45 min each

STAR 8

A Survey of Inter-Prediction Methods for Time-Varying Mesh Compression

STAR 9

State-of-the-art in deep learning approaches for single-panorama indoor modeling and exploration

Education 1

- A Project-Based Approach to Teaching Game Engine Architecture
- Remaking Games for Building Game Development and Programming Skills
- (Easy + Hard)/ 2 = balanced advanced rendering assignments

Education 2

- The Way of the Duck - A Road Towards Constructivism and Scaffolding Supported Computer Graphics Learning
- A Comparison of Low-Barrier Support Approaches for Computer Graphics Assignments in LaTeX
- An Interactive and Immersive Assignment for Teaching Matrix Composition in Secondary Education

Education 3

- Hack More: Hackathon on Modelling and Rendering
- GLSL Assignment on Perlin Noise
- Come On Shader, Light My Tile: A Student Project Supporting Tile Normal Mapping Workflows in Unity
- SpeakEase: A Virtual Reality Framework for Public Speaking

Keynote 1

The Quest for Easy Creation, Editing and Real-Time Rendering of Realistic 3D Scenes

Session chair: Michael Wimmer

Keynote 3

Towards Efficient World Models for Visual Intelligence

Session chair: Leif Kobbelt

Keynote 4

Shaping the future of our 3D immersion in digital worlds

Session chair: Ana Serrano

Poster Q & A

- Video Rater: A Framework for Subjective Evaluation of Rendering Artifacts
- Kollani: A Distributed Tool for Real-Time Collaborative Reviews of 3D Assets
- Neural Approximation of Generalized Voronoi Diagrams
- Dynamic Region Filling for Robotic Artistic Painting using Visual Feedback
- Hybrid Contrast-Aware Fog Detection for Automotive Vision Systems
- Semantic Weaponry: A Modular Approach to Text-to-3D Model Generation
- Automating Makeup Appearance Acquisition via Inverse Rendering for Virtual Try-On
- MBRCNet: Multi-view Breast Reconstruction and Classification Network
- Deep Illumination–Guided Light Probe Placement
- Compressing Double-Phase Holograms using 2D Gaussians
- Real-Time Angular Color Shift Compensation for On-Set Virtual Production
- Still2Scene: Hybrid Gaussian Environments for Virtual Production
- Semi-Automatic View-Based Segmentation of Gaussian Splat Scenes
- Decoupled Reprojection Consistency for Diagnosing 3D Gaussian Splatting Failures
- Opacity-Based Occlusion Culling for 3D Gaussian Splatting
- Smaller and Faster 3DGS via Post-Training Dictionary Learning

Keynotes

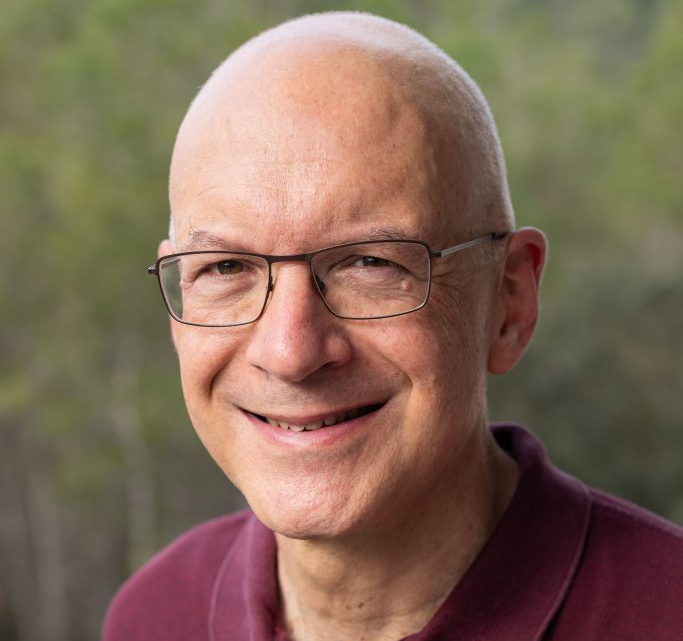

George Drettakis

Inria Université Côte d’Azur

Session chair: Michael Wimmer

The Quest for Easy Creation, Editing and Real-Time Rendering of Realistic 3D Scenes

In this talk we will present over 25 years of research motivated by the goal of providing solutions to easily create realistic 3D scenes by capturing real content, allowing subsequent editing (most importantly relighting), and allowing real-time rendering of the resulting scenes. We look back at several early projects, and how they allowed us to advance our understanding of the fundamental difficulties of developing algorithms to achieve our goals by building on physics-based rendering and traditional graphics solutions. We will then stress the importance of being open to new tools and methodologies, most importantly deep learning. We will illustrate how adopting such techniques and methodologies early provided a significant advantage, both in relighting and real-time rendering for novel view synthesis, in part by building on our expertise in realistic rendering for training data generation. We will discuss the importance of efficiency and optimization even in early stages of these research projects, and finally discuss how the power of recent generative models provides exciting new possibilities, opening the way to powerful solutions to our overarching goals of easily creating, editing and rendering realistic 3D content.

George Drettakis graduated in Computer Science (CS) in Crete, Greece, obtained an M.Sc. and a Ph.D. (1994) in CS at the University of Toronto, Canada, under the supervision of Eugene Fiume, followed by an ERCIM postdoc in Grenoble, Barcelona and Bonn (94-95). He obtained an Inria researcher position in the iMAGIS group in Grenoble (1995), and the degree of "Habilitation" at the University of Grenoble (1999). In 2000 he founded the REVES research group at INRIA Sophia-Antipolis (2002-2015), followed by the current GRAPHDECO group. He has received several awards: the Eurographics (EG) Outstanding Technical Contributions award in 2007, EG Distinguished Career Award (2024), Inria-French Academy of Sciences Grand Prix (2024), the ACM SIGGRAPH Computer Graphics Achievement Award (2025), and was named EG (2007) and ACM Fellow (2026). He was papers co-chair of the EG Rendering Workshop in 1998, EG conference in 2002 and 2008, technical papers chair of SIGGRAPH Asia 2010, associate editor for major graphics journals, and chairs the EG working group on Rendering. His research spans many topics in computer graphics, with an emphasis on rendering. He initially concentrated on lighting and shadow computation and subsequently worked on 3D audio, perceptually-driven algorithms, virtual reality and 3D interaction. In recent years he has focused more on learning-based appearance capture, relighting and novel view synthesis (previously known as image-based rendering), culminating in the development of 3D Gaussian Splatting.

Jaakko Lehtinen

Aalto University / NVIDIA Research

Session chair: Belen Masia

Graphics' Final Frontier

Computer graphics has undergone an incredible journey from its (visually) humble beginnings into our current ability to simulate the appearance and motion of complex scenes to a degree often difficult to distinguish from reality. Yet closing the final gap to the look and feel of live action footage remains elusive. At the same time, modern purely data-driven methods routinely surpass the realism of traditional first-principles graphics approaches, but come with only coarse controls. In this talk, I'll draw on my experience of working with both classic and data-driven image generation techniques and attempt to outline a vision for the "endgame" of computer graphics that synthesizes the classic first-principles approaches with the power of data.

Jaakko is an associate professor at Aalto University and a distinguished research scientist at NVIDIA Research in Helsinki, Finland. He works on computer graphics and machine learning, with particular interests in generative modelling, realistic image synthesis, and appearance acquisition and reproduction. Overall, he's fascinated by the combination of machine learning techniques with physical simulators in the search for robust, interpretable AI. Prior to his current positions, Jaakko spent 2007-10 as a postdoc with Frédo Durand at MIT. Before his research career, he worked for the game developer Remedy Entertainment in 1996-2005 as a graphics programmer, and contributed significantly to the graphics technology behind the worldwide blockbuster hit games Max Payne (2001), Max Payne 2 (2003), and Alan Wake (2009).

Lourdes De Agapito Vicente

University College London / Synthesia Technologies

Session chair: Justus Thies

Learning to See the 3D World

Building algorithms that can emulate human 3D perception, using as input single images or video sequences taken with a consumer camera, proved to be a challenging task for years but has recently seen astounding progress. For decades, machine learning solutions faced the challenge of scarcity of 3D annotations, encouraging important advances in weak and self-supervision. However, recent efforts in large-scale paired image-3D dataset collection have led to a paradigm shift and fully supervised feed-forward large 3D reconstruction models have become a reality. In this talk I will describe progress in both static and dynamic 3D reconstruction, from early optimization-based solutions that captured sequence-specific 3D models, towards more powerful 3D-aware neural representations that can be trained from 2D image supervision only, to today’s large transformer-based, multi-view feed-forward models for metric-scale dense 3D reconstruction. I will also describe the successful commercial uptake of this technology and will show its application to AI-driven video synthesis.

Lourdes holds the position of Professor of 3D Vision at the Department of Computer Science, University College London (UCL) where she heads the Vision and Imaging Science Group. She received her BSc, MSc and PhD degrees from Universidad Complutense de Madrid (Spain). In 1997 she joined the Robotics Research Group at the University of Oxford as an EU Marie Curie Fellow. In 2001 she was appointed Lecturer at Queen Mary University of London, where she held an ERC Grant. Lourdes joined UCL in 2013 and was promoted to full professor in 2015. Her research in computer vision has consistently focused on the inference of 3D information from images or videos acquired with a single camera. Lourdes has served as Program Chair for CVPR 2016 and ICCV 2023, serves regularly as Area Chair for the top Computer Vision conferences (CVPR, ICCV, ECCV) and was Keynote speaker at ICRA 2017, ICLR 2021 and ECCV'24. Lourdes is co-founder of London-based startup Synthesia, the world’s largest AI video generation platform for business, currently valued at $4B. Synthesia's text-to-video technology allows users to create professional videos directly on the browser, removing the physical constraints of conventional production.

Bernd Bickel

ETH Zurich

Session chair: Amir Vaxman

Design in the Age of AI and Spatial Computing

As the boundaries between the digital and physical worlds blur, we face a profound opportunity to reimagine how we design the world around us. While advanced manufacturing, artificial intelligence, and spatial computing offer unprecedented potential for architecture, engineering, and art, their impact is often limited by a lack of design tools that can seamlessly bridge human creativity with physical realizability. In this talk, I will explore the transformation of design workflows from traditional CAD tools toward intelligent design systems. I will discuss how optimization-based design and tailored data-driven models enable novel approaches for interactive shape exploration and beyond, demonstrating their applicability to challenges ranging from intricate microstructures to high-performance building facades. A central theme is the control problem: the inherent tension between the probabilistic nature of modern generative AI and the high precision and editability required for professional engineering. I will conclude by reflecting on the evolving role of algorithms as creative partners. I will share a vision for a future where technology provides the "digital superpowers" that complement rather than replace human intuition, enabling us to build a more sustainable, functional, and resilient world.

Bernd Bickel is a Full Professor of Computational Design at ETH Zurich and a Research Scientist at Google. He previously served as a Professor and Vice President at ISTA and worked as a Research Scientist at Disney Research. He received his PhD in Computer Science from ETH Zurich in 2010. His research intersects visual computing, digital fabrication, and machine learning, focusing on computational tools that bridge digital design and physical manufacturing. His work includes high-fidelity performance capture, data-driven material modeling, functional metamaterials, and creative AI & generative design, integrating physics-based simulation with machine learning to create high-performance structures and systems. Bernd’s contributions have been recognized with a Technical Achievement Award from the Academy of Motion Picture Arts and Sciences (2019), the ACM SIGGRAPH Significant New Researcher Award (2017), an ERC Starting Grant (2016), and the ETH Medal (2011) for his doctoral dissertation.

Anatole Lécuyer

Inria Rennes/IRISA

Session chair: Ana Serrano

Shaping the future of our 3D immersion in digital worlds

Virtual reality (VR) naturally evokes a set of advanced technologies designed to immerse users in synthetic 3D worlds simulated in real-time by a computer. Through dedicated interfaces such as head‑mounted displays, VR applications enable powerful experiences, transporting users to imaginary places or allowing them to interact with virtual characters and remote people. The first VR systems date back to the 1960s, but today we are living through a pivotal moment for the field, as it steadily moves toward widespread, mass‑market adoption. In this talk, we will explore the next steps for VR technologies. We will first argue that VR is progressively introducing greater physical engagement into 3D human-computer interaction, for example through haptic technologies (tactile or force feedback) or through virtual embodiment via self‑avatars (anthropomorphic representations of the user within a virtual environment). We will also examine the ongoing convergence of VR with physiological and neural interfaces, pointing toward future interactive systems that directly leverage users’ cognitive states and open the door to even more compelling and holistic experiences. The talk will be illustrated with some of our latest scientific results, offering a glimpse of what could become the future of our 3D immersion in digital worlds.

Anatole Lécuyer is Director of Research at Inria, the French National Institute for Research in Digital Science and Technology, based in Rennes. For more than 20 years, he has been conducting research in the field of virtual reality, exploring new ways of interacting with virtual worlds, such as haptic or neural interfaces. He is the co‑author of over 250 scientific publications and 15 patents. He serves as an expert for numerous organizations, including the French National Research Agency and the European Commission. He served as Associate Editor of IEEE Transactions on Visualization and Computer Graphics, and Presence journal. He was General Chair of the IEEE Virtual Reality Conference (2025), Program Chair of IEEE Virtual Reality Conference (2015-2016) and General Chair of IEEE Symposium on Mixed and Augmented Reality (2017). Anatole Lécuyer received the Inria–Académie des Sciences Young Researcher in Digital Science Award in 2013, the IEEE VGTC Technical Achievement Award in Virtual/Augmented Reality in 2019, and was inducted into the IEEE Virtual Reality Academy in 2022.

Björn Ommer

Ludwig Maximilian University of Munich

Session chair: Leif Kobbelt

Towards Efficient World Models for Visual Intelligence

Visual intelligence requires more than perception or the generation of plausible images or videos. It requires world models that represent the state of the world and how it changes. While recent progress in learning scene appearance from images and video has been remarkable, explicit models of kinematics are still vastly lacking: Current video models are largely computationally costly, focus on synthesizing only a single likely future, and paint future pixels rather than explicitly representing all possible motions that could lead there. In this talk, I will present recent progress toward efficient world models that make dynamics directly accessible, represent a multitude of possible futures, and allocate computation adaptively to the dynamic content of a scene rather than uniformly to individual pixels. Consequently, efficiency is not merely a matter of speed. It becomes a modeling principle that shapes what world models represent, how they reason, and what applications they make possible. The talk will then broaden the perspective and ask what happens when generative AI turns intelligence into a scalable, widely accessible commodity, propelling us from an information society toward a knowledge society with democratized access to actionable knowledge. Efficient world models, in this sense, are not simply compressed versions of larger systems, but a step toward visual intelligence grounded in dynamics, uncertainty, and efficient reasoning.

Björn Ommer is a full professor of Computer Science at LMU Munich, where he leads the Computer Vision & Learning Group. Previously he was a full professor at Heidelberg University. After studying computer science and physics at the University of Bonn, he earned a Ph.D. from ETH Zurich, and held a postdoc position at UC Berkeley. He is LMU's Chief AI Officer, a director of the Bavarian AI Council, an ELLIS Fellow, and has served as an editor for IEEE T-PAMI and on the boards of numerous CVPR, ICCV, ECCV, and NeurIPS conferences. Björn's research interests are in generative AI, visual understanding, and explainable neural networks. His group developed several influential approaches in generative modeling, such as Stable Diffusion, which have seen broad adoption across academia, industry, and beyond and reflect his broader goal of advancing the democratization of generative AI.